Daijiworld Media Network - Washington

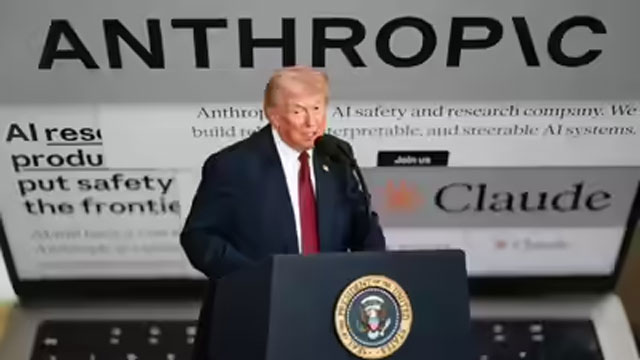

Washington, Feb 28: US President Donald Trump on Friday ordered all federal agencies to stop using artificial intelligence technology developed by Anthropic, escalating a public dispute between the company and the Pentagon over AI safety and military use.

Trump’s directive came shortly before a Pentagon deadline for Anthropic to allow unrestricted military use of its AI systems or face consequences. The president said most agencies must immediately halt the use of Anthropic’s technology, though the Defense Department would be given six months to phase it out from platforms where it is already embedded.

“We don’t need it, we don’t want it, and will not do business with them again!” Trump said.

The clash centres on the role of advanced AI in national security, particularly concerns over the use of such systems in lethal operations, sensitive intelligence environments and surveillance. Anthropic, maker of the chatbot Claude, had sought assurances that its technology would not be used for mass surveillance of Americans or in fully autonomous weapons systems.

Anthropic CEO Dario Amodei said the company “cannot in good conscience accede” to the Defense Department’s demands, arguing that revised contract language would allow agreed safeguards to be disregarded.

Defense Secretary Pete Hegseth had reportedly warned that failure to comply could result in the cancellation of Anthropic’s contract and its designation as a “supply chain risk” — a label typically applied to foreign adversaries and one that could jeopardise critical partnerships.

Trump did not formally apply that designation but cautioned that Anthropic could face “major civil and criminal consequences” if it fails to cooperate during the transition period.

The move is likely to benefit rivals including xAI, founded by Elon Musk, whose chatbot Grok is expected to gain access to classified military networks. Other competitors such as OpenAI and Google also hold contracts to supply AI tools to the US military.

In a surprise development, OpenAI CEO Sam Altman publicly expressed support for Anthropic’s stance, saying he largely trusted the company’s commitment to safety and questioned the Pentagon’s “threatening” approach.

The dispute has sparked wider debate in Silicon Valley over the ethical boundaries of AI deployment in defence settings. Some employees from OpenAI and Google issued an open letter criticising what they described as attempts to pressure companies into compliance by playing them against one another.

Meanwhile, Musk backed the administration, writing on social media that “Anthropic hates Western Civilization.”

Concerns have also been raised on Capitol Hill. Senator Mark Warner said the decision, combined with inflammatory rhetoric, raised questions about whether national security policy was being shaped by political considerations rather than careful analysis.

Retired Air Force General Jack Shanahan, a former leader of the Pentagon’s AI initiatives, said large language models such as Claude were not yet ready for fully autonomous national security applications and described Anthropic’s safeguards as reasonable.

Anthropic, founded in 2021 by Amodei and former OpenAI colleagues, has emerged as one of the world’s most valuable AI startups. The outcome of its standoff with the Pentagon is likely to shape the future relationship between the US government and private AI developers.