Making the move from Splunk to Elastic SIEM is a big decision. Firms do not make this move simply because they are bored. They move because something has shifted. Licensing costs crept up. Data volumes grew faster than expected. Business units demanded broader log coverage. Or the security team simply wanted more flexibility in how data is stored and queried.

A migration of this scale is rarely technical alone. It touches operations, detection logic, compliance, infrastructure, and people. A neat project plan on paper does not survive first contact with production systems.

This Splunk to Elastic SIEM checklist reflects the practical considerations that surface mid-project, not just the ones that look tidy in a planning document.

Why Migrations Get Complicated

Splunk and Elastic solve similar problems, but they approach them differently.

Splunk is built around indexed data and the Search Processing Language (SPL). Elastic SIEM sits within the Elastic Stack, built on Elasticsearch, with a different query structure and pipeline philosophy. On the surface, both ingest logs, correlate events, and generate alerts. Underneath, the assumptions differ.

Detection rules behave differently. Data models are structured differently. Storage economics differ. Query performance changes depending on shard configuration. Even analyst workflows shift slightly.

Ignoring those differences leads to friction later.

Step One: Establish Why the Move is Happening

Before any technical work begins, the reason for change needs to be clear and written down.

Cost reduction is valid. So is scalability. So is architectural alignment with an existing Elastic environment. But vague motivations like “modernisation” cause drift during execution.

Clarity here affects design decisions later. If cost is the driver, storage tiering and retention planning take priority. If performance and flexibility matter more, architecture decisions change.

Without this anchor, the migration becomes a feature comparison exercise rather than a controlled transition.

Step Two: Audit the Current Splunk Estate

It is common to discover more complexity than expected.

Over time, Splunk environments accumulate:

- Custom dashboards that no one documented

- Ad hoc search queries used only by specific analysts

- Scheduled reports that feed compliance evidence

- Correlation searches that trigger tickets in downstream systems

- Apps and add-ons handling parsing logic

Some of these are critical. Some are historical leftovers. Differentiating between the two is harder than it sounds.

Every saved search, alert, lookup, data model and integration should be catalogued, so that each one can either be mapped to an Elastic equivalent or retired deliberately.

Moving to Elastic without understanding what is actively relied upon results in gaps that only appear after the cutover.
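One way to start the catalogue is to parse exported saved search definitions and sort them by how likely they are to be relied upon. The sketch below is a minimal illustration, not a complete inventory tool. It assumes the export follows the stanza layout of `savedsearches.conf` with the `enableSched`, `cron_schedule` and `actions` keys; the sample content and the `triage` helper are hypothetical, and multi-line searches with backslash continuations would need extra handling.

```python
import configparser

# Hypothetical sample in the style of a savedsearches.conf export.
SAMPLE_CONF = """
[Failed Logins - Threshold]
search = index=auth action=failure | stats count by user
enableSched = 1
cron_schedule = */5 * * * *
actions = email

[Old Proxy Report]
search = index=proxy | top uri
enableSched = 0
"""


def triage(conf_text):
    """Return one inventory row per saved search stanza."""
    parser = configparser.ConfigParser(
        interpolation=None,   # searches may contain '%' characters
        delimiters=("=",),    # only the first '=' separates key from value
        strict=False,         # tolerate duplicate stanzas across apps
    )
    parser.optionxform = str  # keep key case, e.g. enableSched
    parser.read_string(conf_text)

    rows = []
    for name in parser.sections():
        stanza = parser[name]
        scheduled = stanza.get("enableSched", "0") == "1"
        actions = [a.strip() for a in stanza.get("actions", "").split(",") if a.strip()]
        rows.append({
            "name": name,
            "scheduled": scheduled,
            "actions": actions,
            # Scheduled searches that trigger actions are reviewed first.
            "priority": "review first" if scheduled and actions else "confirm usage",
        })
    return rows


inventory = triage(SAMPLE_CONF)
for row in inventory:
    print(row)
```

The "confirm usage" bucket is where historical leftovers tend to hide, so it still needs an owner to confirm each entry before anything is dropped.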

Step Three: Map Data Sources and Parsing Logic

Data ingestion is rarely clean.

Splunk often uses heavy forwarders, indexers and specific field extraction logic. Elastic relies on Beats, Elastic Agent, Logstash, and ingest pipelines. Field naming conventions differ. Timestamps may be handled differently. Even basic things such as host identification can vary.

If logs are not normalised properly in Elastic, detection rules will fail quietly.
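A small mapping table makes the normalisation problem concrete. The sketch below assumes the target naming follows the Elastic Common Schema (ECS) and that the source events use short Splunk-style field names; the `FIELD_MAP` table and the `normalise` helper are illustrative assumptions, and the real mapping has to come from the audited data sources.

```python
# Illustrative mapping from Splunk-style field names to dotted ECS-style names.
# This table is an assumption; build the real one from the audited sources.
FIELD_MAP = {
    "src": "source.ip",
    "dest": "destination.ip",
    "src_port": "source.port",
    "dest_port": "destination.port",
    "user": "user.name",
    "host": "host.name",
}


def normalise(event):
    """Rename known fields and report the ones left unmapped."""
    mapped, unmapped = {}, []
    for key, value in event.items():
        target = FIELD_MAP.get(key)
        if target is None:
            unmapped.append(key)
            mapped[key] = value  # keep the original so nothing is silently lost
        else:
            mapped[target] = value
    return mapped, sorted(unmapped)


event = {"src": "10.0.0.5", "dest": "10.0.0.9", "user": "alice", "vendor_action": "blocked"}
mapped, unmapped = normalise(event)
print(mapped)
print("unmapped fields:", unmapped)
```

Reporting unmapped fields explicitly is the point of the exercise: a detection rule that filters on a field which was never renamed will simply match nothing, without raising an error.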

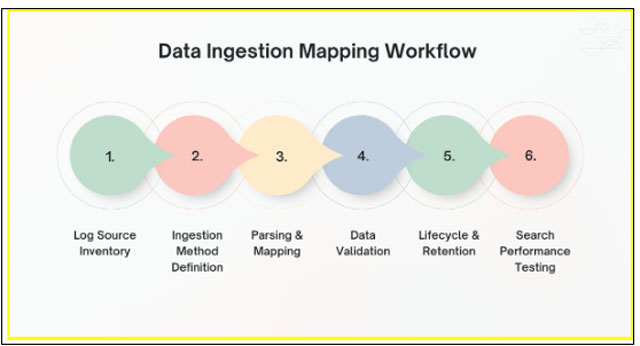

Before diving into configuration detail, the sequence matters.

- Inventory existing log sources

- Define ingestion method in Elastic

- Configure parsing and field mapping

- Validate data accuracy and completeness

- Confirm index lifecycle and retention settings

- Test search performance and query compatibility

Each step depends on the previous one. Skipping the validation step (the fourth item in the list above) often causes confusion later, when analysts report “missing” events that were actually misparsed.

This is where the Splunk to Elastic SIEM checklist becomes more than documentation. It becomes a guardrail.
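The validation step in the sequence above can be sketched as a per-source count comparison over the same time window. This is a minimal example under stated assumptions: the counts are made-up numbers that would in practice come from a query on each platform, and the `compare_counts` helper and its 1% tolerance are hypothetical choices.

```python
def compare_counts(splunk_counts, elastic_counts, tolerance=0.01):
    """Flag sources whose Elastic count deviates from Splunk by more than tolerance."""
    findings = {}
    for source, expected in splunk_counts.items():
        actual = elastic_counts.get(source, 0)
        if expected == 0:
            continue  # nothing to compare against
        drift = abs(actual - expected) / expected
        if drift > tolerance:
            findings[source] = {
                "splunk": expected,
                "elastic": actual,
                "drift": round(drift, 3),
            }
    return findings


# Made-up counts for the same window on both platforms.
splunk_counts = {"firewall": 120_000, "windows_security": 45_000, "proxy": 80_000}
elastic_counts = {"firewall": 119_950, "windows_security": 31_500, "proxy": 80_000}

findings = compare_counts(splunk_counts, elastic_counts)
for source, detail in findings.items():
    print(source, detail)
```

Matching counts only show completeness. Accuracy still needs a sample of events checked field by field, because a misparsed event is counted even when its fields are wrong.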

Step Four: Rebuild Detection Logic Carefully

Detection engineering is not copy-and-paste work.

Splunk SPL queries do not translate directly into Elastic Query DSL or Kibana Query Language (KQL). The logic may appear similar, but small syntactic differences change behaviour.

Correlation searches in Splunk often rely on specific index naming conventions or summary indexing. Elastic may require rule recreation within its detection engine, along with different threshold configurations.

Blindly porting queries introduces subtle logic errors.

A safer approach involves:

- Reviewing each high-value detection rule

- Rewriting logic in native Elastic syntax

- Testing against historical data

- Comparing output with original Splunk alerts

False positives and false negatives both surface during this stage. It is uncomfortable but necessary.
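Comparing output between the two platforms is easier when alerts are reduced to a common key. The sketch below assumes each alert can be expressed as a tuple of rule, entity and hourly time bucket; that key shape, the sample alerts and the `compare_alerts` helper are hypothetical.

```python
def compare_alerts(splunk_alerts, elastic_alerts):
    """Compare alert sets keyed by (rule, entity, time bucket)."""
    splunk_set, elastic_set = set(splunk_alerts), set(elastic_alerts)
    return {
        "matched": sorted(splunk_set & elastic_set),
        # Present in Splunk only: candidate false negatives in the rewritten rule.
        "missing_in_elastic": sorted(splunk_set - elastic_set),
        # Present in Elastic only: candidate false positives or new coverage.
        "only_in_elastic": sorted(elastic_set - splunk_set),
    }


# Illustrative alerts replayed over the same historical window.
splunk_alerts = [
    ("brute_force", "alice", "2024-05-01T10"),
    ("brute_force", "bob", "2024-05-01T11"),
    ("dns_tunnel", "host-17", "2024-05-01T12"),
]
elastic_alerts = [
    ("brute_force", "alice", "2024-05-01T10"),
    ("dns_tunnel", "host-17", "2024-05-01T12"),
    ("dns_tunnel", "host-22", "2024-05-01T12"),
]

report = compare_alerts(splunk_alerts, elastic_alerts)
for bucket, alerts in report.items():
    print(bucket, alerts)
```

Each entry in the two difference buckets needs a human decision. A difference may be a bug in the rewritten rule, or it may expose a weakness in the original Splunk search.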

Compliance-driven detections deserve particular attention. Missing one regulatory alert because a field was renamed is not a theoretical risk.

Step Five: Address Retention and Storage Economics

One of the main drivers behind migration is cost control. Elastic offers more flexibility in hot, warm, and cold data tiers.

However, assumptions must be tested.

Splunk licensing is often volume-based. Elastic shifts the conversation toward infrastructure sizing and storage management. That does not automatically mean cheaper. It means different cost structures.
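A rough sizing calculation helps test the cost assumption before any hardware or cloud capacity is committed. The sketch below is back-of-envelope arithmetic only. Every input is an assumption to be replaced with measured values: the daily ingest, the 10% index overhead factor, one replica on the hot tier and none on the cold tier. The `estimate_storage_gb` helper is hypothetical.

```python
def estimate_storage_gb(daily_ingest_gb, hot_days, cold_days,
                        replicas=1, index_overhead=1.1):
    """Estimate stored volume per tier from daily ingest and retention.

    Assumes replicas are kept on the hot tier only and that indexed data
    is about `index_overhead` times the raw volume.
    """
    hot = daily_ingest_gb * hot_days * index_overhead * (1 + replicas)
    cold = daily_ingest_gb * cold_days * index_overhead
    return {"hot_gb": round(hot), "cold_gb": round(cold)}


# Hypothetical inputs: 200 GB/day, 90 days hot, a further 275 days cold.
estimate = estimate_storage_gb(daily_ingest_gb=200, hot_days=90, cold_days=275)
print(estimate)
```

Multiplying each tier by its actual price per gigabyte, then adding compute and operations effort, gives a figure that can be compared honestly with the current licence cost.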

Retention policies should reflect actual investigative needs, not inherited defaults.

For example, security teams may only actively search ninety days of hot data. Everything beyond that may be archival. Designing this intentionally avoids recreating previous cost problems in a new environment.
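That intent can be written down as an index lifecycle management (ILM) policy body. The sketch below builds one as a Python dictionary and prints it as JSON. It is an illustration under assumptions: the daily rollover, the 50 GB shard size and the 365-day deletion point are placeholder values, the `build_ilm_policy` helper is hypothetical, and the policy would still need to be reviewed and applied through the cluster's own tooling.

```python
import json


def build_ilm_policy(hot_days=90, delete_days=365):
    """Return an ILM policy body: hot until hot_days, then cold, then delete."""
    return {
        "policy": {
            "phases": {
                "hot": {
                    "actions": {
                        # Roll over daily or when a primary shard reaches 50 GB.
                        "rollover": {"max_age": "1d", "max_primary_shard_size": "50gb"}
                    }
                },
                "cold": {
                    # min_age is measured from rollover, not from index creation.
                    "min_age": f"{hot_days}d",
                    "actions": {"set_priority": {"priority": 0}},
                },
                "delete": {
                    "min_age": f"{delete_days}d",
                    "actions": {"delete": {}},
                },
            }
        }
    }


policy = build_ilm_policy()
print(json.dumps(policy, indent=2))
```

Keeping the retention numbers as parameters makes it harder for an inherited default to slip through unexamined, since each value has to be chosen explicitly per data source.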

Step Six: Validate Integrations and Automation

SIEM platforms rarely operate alone.

Ticketing systems, SOAR tools, email gateways, threat intelligence feeds, vulnerability scanners, and identity providers may all connect to the SIEM.

Each integration requires testing.

- Does alert forwarding still work?

- Do enrichment scripts still populate correctly?

- Are automated response playbooks compatible?

It is easy to focus on core log ingestion while overlooking surrounding automation. The impact only becomes visible when incidents stop flowing into response queues.

Testing should simulate realistic scenarios, not just synthetic events.
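A simple smoke check can replay an alert from a past incident and confirm that the downstream ticket would carry everything responders need. The sketch below is a minimal example with hypothetical names throughout: the alert shape, the required ticket fields, and the `to_ticket` and `missing_fields` helpers stand in for whatever the real ticketing integration uses.

```python
REQUIRED_TICKET_FIELDS = ("title", "severity", "host", "rule_name")


def to_ticket(alert):
    """Hypothetical mapping from an alert document to a ticket payload."""
    return {
        "title": f"[{alert.get('severity', 'unknown')}] {alert.get('rule_name', 'unnamed rule')}",
        "severity": alert.get("severity"),
        "host": alert.get("host"),
        "rule_name": alert.get("rule_name"),
    }


def missing_fields(ticket):
    """List required ticket fields that are absent or empty."""
    return [field for field in REQUIRED_TICKET_FIELDS if not ticket.get(field)]


# A replayed alert from a past incident, minus the host enrichment.
replayed_alert = {"rule_name": "Multiple failed logins", "severity": "high", "user": "alice"}

gaps = missing_fields(to_ticket(replayed_alert))
print("missing ticket fields:", gaps)
```

In this example the ticket would be created, but without a host. That is the kind of failure that passes a connectivity test and only shows up once an analyst tries to act on the ticket.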

Step Seven: Plan a Phased Cutover

A full overnight migration carries risk.

Running both platforms in parallel for a defined period reduces exposure. During this phase:

- Alerts from both systems can be compared

- Analyst feedback can be gathered

- Performance can be monitored under real load

Parallel operation also surfaces operational differences. Analysts may notice query speed improvements. Or they may struggle with new search syntax.

This period is less about technology and more about behaviour change.

A rushed cutover often results in quiet frustration within the SOC.

Step Eight: Train Analysts in Context, Not Theory

Training sessions often focus on features.

What tends to work better is scenario-based familiarisation. Analysts should recreate previous investigations inside Elastic. They should attempt threat hunts using real data. They should intentionally break queries and understand error behaviour.

Muscle memory from Splunk does not disappear overnight. That adjustment period needs space.

Practical exposure reduces resistance more effectively than slide decks.

Common Pitfalls Observed in Real Migrations

Certain patterns appear repeatedly:

- Underestimating field mapping complexity.

- Overlooking legacy dashboards tied to board reporting.

- Assuming detection accuracy will remain identical without structured validation.

- Failing to benchmark performance before decommissioning Splunk.

Another issue surfaces when security teams underestimate the cultural attachment to existing tooling. People trust what they know. Replacing a SIEM is not just a platform decision. It touches identity within the SOC.

These softer factors influence project outcomes more than most technical challenges.

Measuring Success After Migration

Success cannot be defined purely by decommissioning Splunk.

Useful measures include:

- Detection coverage parity or improvement

- Reduction in ingestion cost per gigabyte

- Analyst query performance

- Stability of integrations

- Reduction in operational overhead

If the security team feels slower or less confident, the migration is not complete, regardless of infrastructure savings.

The Splunk to Elastic SIEM checklist should therefore remain a living reference even after cutover.
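Two of the measures above reduce to simple arithmetic that can be tracked month by month. The sketch below is illustrative only: the rule names and cost figures are made up, and the `coverage_parity` and `cost_per_gb` helpers are hypothetical.

```python
def coverage_parity(splunk_rules, validated_elastic_rules):
    """Share of original detections with a validated Elastic equivalent."""
    if not splunk_rules:
        return 1.0
    covered = set(splunk_rules) & set(validated_elastic_rules)
    return len(covered) / len(set(splunk_rules))


def cost_per_gb(monthly_cost, monthly_ingest_gb):
    """Ingestion cost per gigabyte for one month."""
    return monthly_cost / monthly_ingest_gb


# Made-up figures for illustration.
splunk_rules = ["brute_force", "dns_tunnel", "priv_escalation", "data_exfil"]
validated = ["brute_force", "dns_tunnel", "priv_escalation"]

parity = coverage_parity(splunk_rules, validated)
unit_cost = cost_per_gb(monthly_cost=12_000, monthly_ingest_gb=6_000)
print(f"coverage parity: {parity:.0%}, cost per GB: {unit_cost:.2f}")
```

Numbers like these do not capture analyst confidence, so they complement rather than replace regular feedback from the SOC.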

Conclusion

A SIEM migration is rarely about tools alone. It reflects shifting cost models, architectural preferences and operational maturity. Moving from Splunk to Elastic demands deliberate mapping, patient validation and honest assessment of existing detection logic.

Shortcuts reveal themselves later, usually during incidents.

Careful planning, phased execution and disciplined testing protect detection capability during transition. The process needs technical rigour and operational sensitivity in equal measure.

Organisations approaching this change often benefit from external guidance grounded in real implementation experience. CyberNX can help you navigate the transition through assessments of your digital environments that identify opportunities for Elastic Stack services and their implementation.

A well-executed migration should leave the SOC stronger, not simply different.